Tag: technology

‘A separate civilization of the AIs is in the process of developing’ — Dr Stephen Wolfram on the merger of GPT with Wolfram Alpha

SARS-1 — Evidence of an Artificial Origin

As the world debates the origin of SARS-CoV-2, most assume the SARS outbreak of 2003 was a natural event. But revisiting the evidence, I found parallels, direct linkages and many unresolved questions.

To understand the origin of SARS-CoV-2 it’s illuminating to revisit the history of the first SARS outbreak and the subsequent investigation of its origin. SARS is assumed to be the result of a natural zoonosis by most scientists – including many who are open to an artificial origin of SARS-CoV-2. But the basis for this assumption may be unsound. There are many parallels between the two viruses both at the molecular level, and also in the epidemiology, pandemic management and origins tracing. Many of the same individuals and institutions play key roles in both. It may be that these outbreaks aren’t independent events, but the result of a long-term research program.

link

Of 738 machine learning researchers polled, 48% gave at least a 10% chance of an extremely bad outcome

I’m scared of AGI. It’s confusing how people can be so dismissive of the risks.

I’m an investor in two AGI companies and friends with dozens of researchers working at DeepMind, OpenAI, Anthropic, and Google Brain. Almost all of them are worried.

Imagine building a new type of nuclear reactor that will make free power.

People are excited, but half of nuclear engineers think there’s at least a 10% chance of an ‘extremely bad’ catastrophe, with safety engineers putting it over 30%.

That’s the situation with AGI. Of 738 machine learning researchers polled, 48% gave at least a 10% chance of an extremely bad outcome.

Of people working in AI safety, a poll of 44 people gave an average probability of about 30% for something terrible happening, with some going well over 50%.

Remember, Russian roulette is 17%.

Eliezer Yudkowsky: Dangers of AI and the End of Human Civilization | Lex Fridman Podcast #368

This is a very long interview. Its main points could probably be made in about 12 minutes. Yudkowsky is a somewhat tortuous speaker who uses metaphors and analogies when direct description would work better. He spends a lot of time doing a sort of Socratic Q&A on Fridman, which was not interesting. The gist is that AI such as GPT-4 is already doing things that were not programmed into it, and we humans do not understand how it is doing them. This is an early form of how AI will rather quickly learn to deceive us, whether or not it is conscious. What must happen, or we face dire consequences, is that AI must be aligned with human values to the extent that we have zero concerns about it self-aggrandizing and killing all of us. According to Yudkowsky, a group of smart physicists would be able to solve the alignment problem in 40 or 50 years, but no one is working on that now and few want to slow down development of AI, which may become powerful enough to do massive damage fairly soon. I think this is a reasonable and serious concern and wonder only why an advanced AI would not know that killing all humans would also remove its energy supplies and maintenance crews. If this topic interests you and you like Yudkowsky and Fridman, the interview is fun to watch and I recommend it.

Another way to look at this is that it is all part of a large psyop which includes everything else that is going on in the world: covid, impending WW3, economic crash, digital currency, etc. From that perspective Yudkowsky and Fridman are either dupes or deep state actors aligned with forces that are working to take over the world. They will use AI as an excuse to crash the internet, possibly attack China and Russia, scare the hell out of everyone, maybe kill most of us, etc. When the dust settles top people will be in charge of an unbrave new world and those of us left standing will have nowhere else to go. In this context, notice Fridman’s expression when Yudkowsky describes human smarts that evolved from “outsmarting other people.” Looks like a tell but who knows.

Please notice that the fear we have of AI is that it will become a KOBK player. And the fear we have that AI is but part of a large psyop is that the people doing that psyop are already KOBK players. What Yudkowsky fears about AI, we all should fear all the time about those who have power over us. My FIML partner is a strong proponent of the psyop interpretation and it is mainly her input that moved me to include it in these comments. ABN

Pausing AI Developments Isn’t Enough. We Need to Shut it All Down — Eliezer Yudkowsky

…To visualize a hostile superhuman AI, don’t imagine a lifeless book-smart thinker dwelling inside the internet and sending ill-intentioned emails. Visualize an entire alien civilization, thinking at millions of times human speeds, initially confined to computers—in a world of creatures that are, from its perspective, very stupid and very slow. A sufficiently intelligent AI won’t stay confined to computers for long. In today’s world you can email DNA strings to laboratories that will produce proteins on demand, allowing an AI initially confined to the internet to build artificial life forms or bootstrap straight to postbiological molecular manufacturing.

If somebody builds a too-powerful AI, under present conditions, I expect that every single member of the human species and all biological life on Earth dies shortly thereafter.

There’s no proposed plan for how we could do any such thing and survive. OpenAI’s openly declared intention is to make some future AI do our AI alignment homework. Just hearing that this is the plan ought to be enough to get any sensible person to panic. The other leading AI lab, DeepMind, has no plan at all.

link

The problem with AI is a gigantic version of the problems all humans face in interpersonal relations—trust. ABN

Zoomers and computers both need ethical communication or both are doomed

I just read a descriptive analysis of zoomers that seems pretty good to me. Assuming there is some truth in it, zoomers can be defined as entirely non-FIML. From a FIML point of view this constitutes unknowing abandonment of our most wonderful talents due mainly to not knowing they are possible.

Sadly, this largely defines all generations that have ever lived. Zoomers are novelties only in that they see no way out of earthly illusions including even caring about finding a way out. In some ways, it has ever been thus, Samuel Beckett on a warm beach, where the sun still shines on the nothing new.

The better way to go is to bring the full power of your human voice and ears to every moment. Let nothing pass you by. Then you can still do nothing while also accomplishing something. The illusions are solipsisms and tautologies but that’s all. No reason to be cucked by them.

The core problem with GPT is it can’t be trusted. GPT is an even purer form of non-FIML than zoomers. GPT is potentially pure KOBK. Both of these fundamental problems illustrate that the most important human endeavor is morality, ethics. Systems in computers or in human brains don’t work optimally without ethics. In this discussion, that becomes abundantly clear. ABN

‘Pause Giant AI Experiments’ – Letter Breakdown w/ Research Papers, Altman, Sutskever and more

This is an excellent overview of the dangers of AI and why we should take a short break instead of rushing ahead. At heart, this is a moral issue. ABN

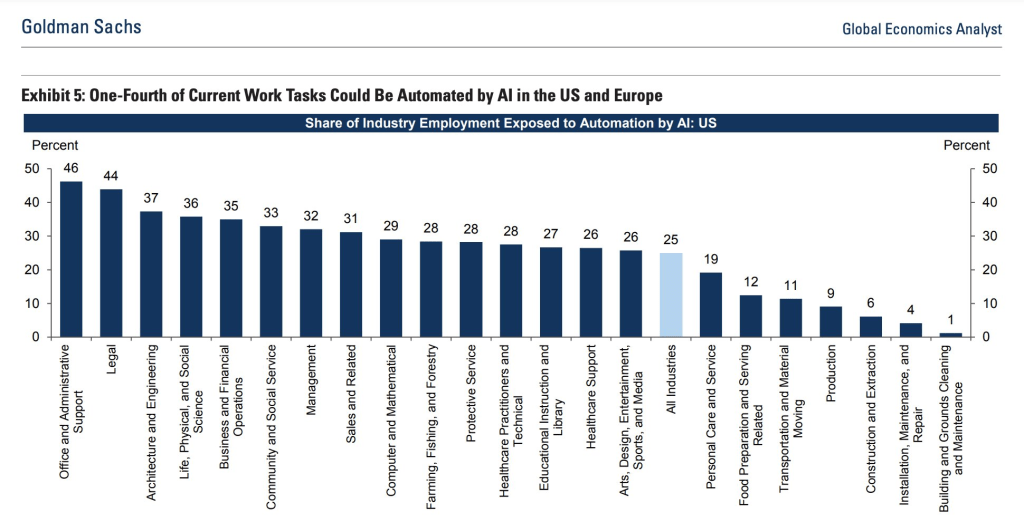

How AI will affect employment

‘It’s a dangerous race that no one can predict or control’: Elon Musk, Apple co-founder Steve Wozniak and 1,000 other tech leaders call for pause on AI development which poses a ‘profound risk to society and humanity’

Elon Musk and 1,000 other technology leaders including Apple co-founder Steve Wozniak are calling for a pause on the ‘dangerous race’ to develop AI, which they fear poses a ‘profound risk to society and humanity’ and could have ‘catastrophic’ effects.

In an open letter published by the Future of Life Institute, Musk and the others argued that humankind doesn’t yet know the full scope of the risk involved in advancing the technology.

They are asking all AI labs to stop developing their products for at least six months while more risk assessment is done.

If any labs refuse, they want governments to ‘step in’. Musk’s fear is that the technology will become so advanced that it will no longer require – or listen to – human interference.

It is a fear that is widely held and even acknowledged by the CEO of OpenAI – the company that created ChatGPT – who said earlier this month that the tech could be developed and harnessed to commit ‘widespread’ cyberattacks.

link

Like a fast-growing Covid variant, AI will become the dominant source of knowledge simply by virtue of growth ~ Peter Nixey

I’m in the top 2% of users on StackOverflow. My content there has been viewed by over 1.7M people. And it’s unlikely I’ll ever write anything there again. Which may be a much bigger problem than it seems. Because it may be the canary in the mine of our collective knowledge. A canary that signals a change in the airflow of knowledge: from human-human via machine, to human-machine only.

Don’t pass human, don’t collect 200 virtual internet points along the way. StackOverflow is *the* repository for programming Q&A. It has 100M users & saves man-years of time & wig-factories-worth of grey hair every single day. It is driven by people like me who ask questions that other developers answer.

Or vice-versa. Over 10 years I’ve asked 217 questions & answered 77. Those questions have been read by millions of developers & had tens of millions of views. But since GPT4 it looks less & less likely any of that will happen; at least for me. Which will be bad for StackOverflow. But if I’m representative of other knowledge-workers then it presents a larger & more alarming problem for us as humans.

What happens when we stop pooling our knowledge with each other & instead pour it straight into The Machine? Where will our libraries be? How can we avoid total dependency on The Machine? What content do we even feed the next version of The Machine to train on? When it comes time to train GPTx it risks drinking from a dry riverbed. Because programmers won’t be asking many questions on StackOverflow. GPT4 will have answered them in private.

So while GPT4 was trained on all of the questions asked before 2021, what will GPT6 train on? This raises a more profound question. If this pattern replicates elsewhere & the direction of our collective knowledge alters from outward to humanity to inward into the machine then we are dependent on it in a way that supersedes all of our prior machine-dependencies. Whether or not it “wants” to take over, the change in the nature of where information goes will mean that it takes over by default.

Like a fast-growing Covid variant, AI will become the dominant source of knowledge simply by virtue of growth. If we take the example of StackOverflow, that pool of human knowledge that used to belong to us – may be reduced down to a mere weighting inside the transformer. Or, perhaps even more alarmingly, if we trust that the current GPT doesn’t learn from its inputs, it may be lost altogether. Because if it doesn’t remember what we talk about & we don’t share it then where does the knowledge even go?

We already have an irreversible dependency on machines to store our knowledge. But at least we control it. We can extract it, duplicate it, go & store it in a vault in the Arctic (as Github has done). So what happens next? I don’t know, I only have questions. None of which you’ll find on StackOverflow.

link

Excellent thread detailing history of OpenAI from its ‘widely democratized’ beginning to the politically biased, for-profit entity it has become now

#1 @elonmusk tells @sama in 2016, “we must have democratization of AI technology and make it widely available, and that’s the reason you, me, & the rest of the team created OpenAI was to help spread out AI technology, so it doesn’t get concentrated in the hands of a few.”

#2 @sama says that he started OpenAI with @elonmusk in 2016, “It’s a non-profit, and the goal is to build general super AI for the benefit of humanity and try to do that in a way where it is not a single for-profit company with a single AI that controls the world and hopefully…

Researchers from Stanford and Google have created a new phase of matter, known as a time crystal

There is a huge global effort to engineer a computer capable of harnessing the power of quantum physics to carry out computations of unprecedented complexity. While formidable technological obstacles still stand in the way of creating such a quantum computer, today’s early prototypes are still capable of remarkable feats.

For example, the creation of a new phase of matter called a “time crystal.” Just as a crystal’s structure repeats in space, a time crystal repeats in time and, importantly, does so infinitely and without any further input of energy – like a clock that runs forever without any batteries. The quest to realize this phase of matter has been a longstanding challenge in theory and experiment – one that has now finally come to fruition.

“The big picture is that we are taking the devices that are meant to be the quantum computers of the future and thinking of them as complex quantum systems in their own right,” said Matteo Ippoliti, a postdoctoral scholar at Stanford and co-lead author of the work. “Instead of computation, we’re putting the computer to work as a new experimental platform to realize and detect new phases of matter.”

“Time-crystals are a striking example of a new type of non-equilibrium quantum phase of matter,” said Vedika Khemani, assistant professor of physics at Stanford and a senior author of the paper. “While much of our understanding of condensed matter physics is based on equilibrium systems, these new quantum devices are providing us a fascinating window into new non-equilibrium regimes in many-body physics.”

link

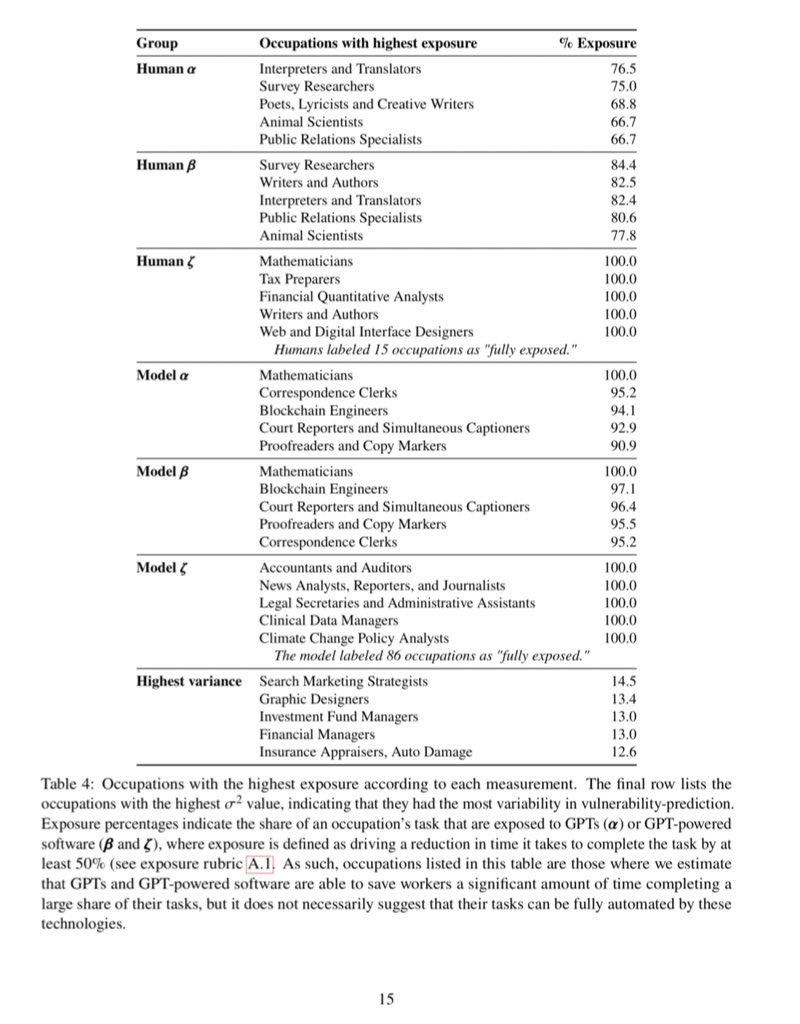

Occupations most at risk to GPT-powered software

As a former translator whose business model was overwhelmed by machine translation, I believe people in the above categories may be able to enjoy being made obsolete. I know I did. Time makes us all obsolete eventually, so seeing it happen while you are still active can be quite beautiful. Attachment is no longer possible and your understanding of what is happening is top flight. ABN